Most Probable Distribution¶

Applications of Most Probable Distribution

Application of most probable distribution is discussed in Boltzmann Statistics.

In Boltzman distribution, one of the key ingredients is to calculate the most probable distribution. First things first, the most probable distribution is indicating the distribution of energy (abbr. distribution) that is most probable. On the other hand, we also talked about the probability of the microstates. It is crucial to understand the difference between distribution and microstates.

Microstates describes the configurations of the system which is the most detailed view of the system in statistical physics. A distribution of the system describes the number of particles on each energy levels of the particle.

Why would we choose most probable distribution?

First of all, tt can be derived easily.

The most probable distribution in Boltzman theory is extremely sharp for large number of particles.

Assuming an actual distribution of the distribution, \(\rho(\{\epsilon_i:a_i\})\) where \(\{\epsilon_i:a_i\}\) is a distribution of energies. The observable \(\langle\mathscr O\rangle\) can be calculated using the following integral

If \(\rho(\{\epsilon_i:a_i\})\) is a delta function distribution, only the most probable distribution, \(\rho_{\text{most probable}}\) is need to calculate observables.

This can be demonstrated with numerical simulations.

Other Possible Distributions

Statistically speaking, energy distribution is not the only available distribution we have. We look into the energy distribution because we would like to derive something easy to use for physics using the fundamental assumption of the probabilities of the microstates.

There are other granular distributions. In Ising model,

- distribution of the magnet directions, i.e., the number of magnets pointing upward and the number of magnets pointing downward, for each microstate,

- distribution of the total energies, i.e., the number of microstates with a specific energy.

Equal A Prior Probability¶

As mentioned in What is Statistical Mechanics, a theory of the distribution of the microstates shall be useful for our predictions of the macroscopic observables.

Equal A Prior Probability

For systems with enormous number of particles, we observe their macroscopic properties such as energies, pressure in experiments. But we have very limited information about the internal structure. The principle proposed by Boltzmann is that all these different possible configurations of microstructure are equally distributed, a.k.a., principle of equal a prior probabilities.

It should be noted that all the possible states to be used for the probabilities should produce the observables we already know. For example, the total energy of the system, \(E\), and the volume of the gass, \(V\) should be consistent for each of the microstates.

An Example of Calculations¶

This is Only an Example for the Calculation

This example is not exactly an statistical physics problem since we do not have enough particles to make it statistically significant. For example, we use Sterling’s approximation but this doesn’t hold in this case.

The equal a-priori principle can be illustrated using a two-magnet system. For simplicity, we will ignore the interactions between the magnets. In the example, we use \(a_i\) to denote the number spins on the different spin states (up or down),

With an external magnetic field, the energy of the system is determined by

where \(s_i=\pm 1\).

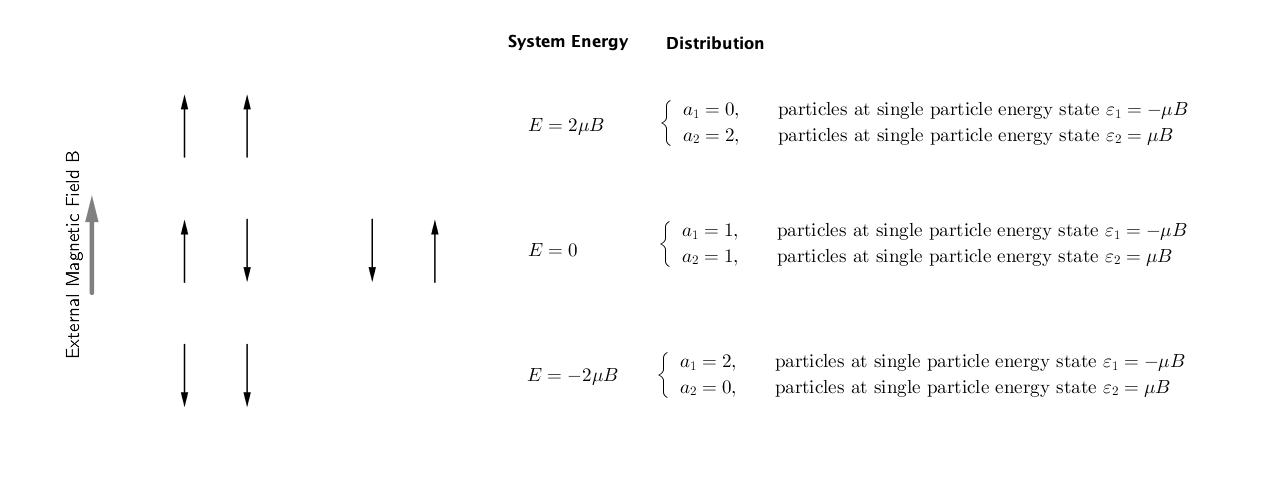

Fig. 9 A simple system of 2 magnets in an external magnetic field. The external magnetic field is pointing upwards. The energy of the system is labelled as \(E\) and the distributions are labelled on the right. In principle, we could also have multiple possible distributions for the same energy of the whole system. In our case, it is simply a coincidence that we only have on distribution corresponding to each energy of the system.

Extended Caption of Fig. 9

We have the following possible energy distributions.

which has total energy of \(2\mu B\) and number of microstates \(\Omega = 1\), and

which has total energy of \(0\) and number of microstates \(\Omega = 2\), and

which has total energy of \(-2\mu B\) and number of microstates \(\Omega = 1\).

Each of the possible configuration of the the two magnets is considered as a microstate. That being said, the equal a-prior principle tells us that the probabilities of the different configurations are the same, for each total energy, if we our restricting observable is energy. For example, the two states for total energy \(E=0\) are have the same probability. This is an effort of least information assumption, a.k.a., the Bernoulli’s principle of indifference [Buck2015] [Yang2012].

In principle, we could calculate all observables of the system using this assumption. However, it will be extremely difficult to tranverse all the possible states (How Expensive is it to Calculate the Distributions).

Probabilities of Distributions¶

Suppose we have an equalibrium system with energy 0. In above example of the 2-magnet system, we only have one distribution and two microstates. We do not need more granular information about the microstates. As we include more magnets, each total energy corresponds to multiple energy distributions. For example, the number of microstates associated with a energy distribution in an Ising model could be huge.

The number of microstates associated with each macrostates can be derived theoretically. Those results are presented in most textbooks. The derivation involves the following steps.

- Find the total number of macrostates (single particle energy distributions), \(\Omega\).

- Take the log of the distribution and find the maximum using Lagrange multipler method.

- The most probable distribution should follow the Boltzman distribution of exponential distribution based on energy levels.

The Magic of Equal a Priori Probabilities¶

Though assuming the least knowledge of the distribution of the microstates, we are still able to predict the observables. There exist several magical processes in this theory.

The first magic is the so-called more is different. Given thorough knowledge of a single particle, we still find phenomena unexplained by the single-particle property.

How could Equal a Priori help?

Equal a priori indicates a homogeneous distribution. How would a homogeneous distribution of microstates be useful to form complex materials?

The reason behind it is the energy degeneracies of the states. Some microstates lead to the same energy, as shown in Fig. 9. Even for the same microstates, the distribution of energies will be different with different interactions applied.

Different degeneracies lead to different observable systems.

Why is Temperature Relevant?

In this formalism, we do not consider the temperature. In the deriveation, we used the Lagrange multiplier method which introduced an equivalent of the temperature.

Temperature is a punishment of our energy distribution. It sets the level of base energy.

How Expensive is it to Calculate the Distributions¶

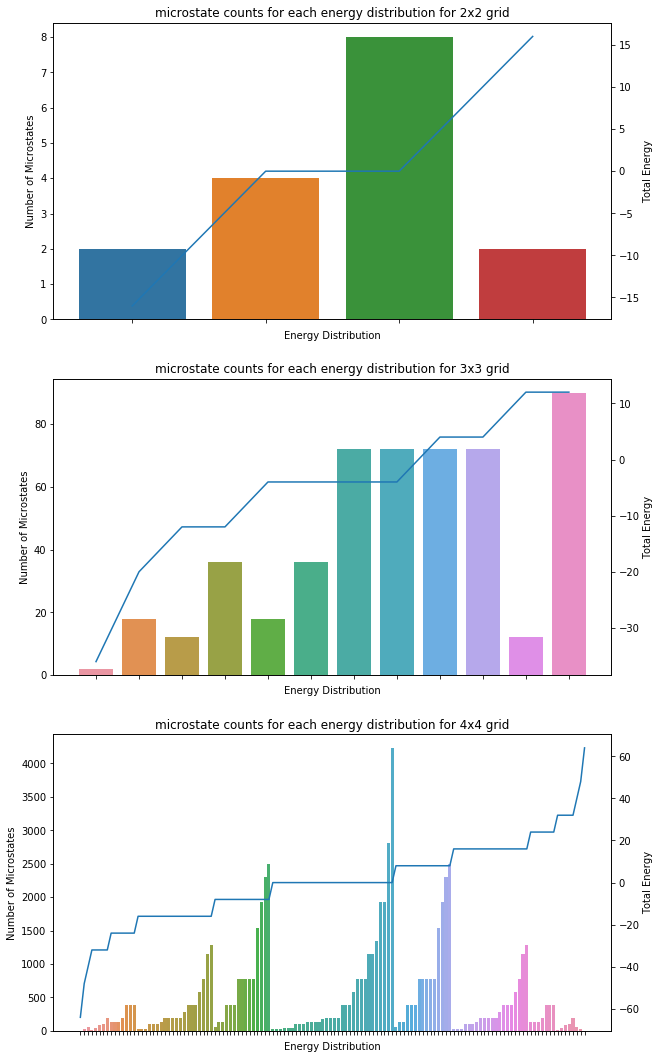

It is very expensive to iterate through all the possible microstates to simulate large systems. To demonstrate this, I use Python to iterate through all the possible states in an Ising model, without any observables constraints. All the results can be dervied theoretically. However we will only show the numerical results to help us building up some inutitions and to understand how expensive it is to iterate through all the possible states.

Ising Model with Self-interactions¶

For example, we could calculate all the configurations and energies of the configurations using brute force. A simple estimation of the number of microstates is \(\Omega = 2^N\), where \(N\) is the number of spins. The number grows exponentially with the number of spins.

Fig. 10 Microstate counts of energy distribution. The bars shows the number of microstates with the specific energy distributions, which indicates the probability of the corresponding distributions given the equal-a-priori principle. The line shows the corresponding energy of the distribution.

In reality, these calculations becomes really hard when the number of particles gets large. For benchmark purpose, I did the calculations in serial on a MacBook Pro (15-inch, 2018) with 2.2 GHz Intel Core i7 and 16 GB 2400 MHz DDR4. It takes about 20min to work out the 5 by 5 grid. The calculation time is scaling up as \(2^N\) where \(N\) is the total number of particles, if we do not implement any parallel computations or other tricks.

References¶

| [Buck2015] | Buck, B., & Merchant, A. C. (2015). Probabilistic Foundations of Statistical Mechanics: A Bayesian Approach. |

| [Yang2012] | Yang X. Yang Xian. In: Thermal and Statistical Physics (PHYS20352) [Internet]. [cited 17 Oct 2021]. Available: https://theory.physics.manchester.ac.uk/~xian/thermal/chap3.pdf |